Researchers from KU Leuven, Materialise, and Iristick have proposed a CAD-based vision method that classifies new 3D printed objects without retraining the model each time a new part enters production.

The Problem: Part Identification in Additive Manufacturing

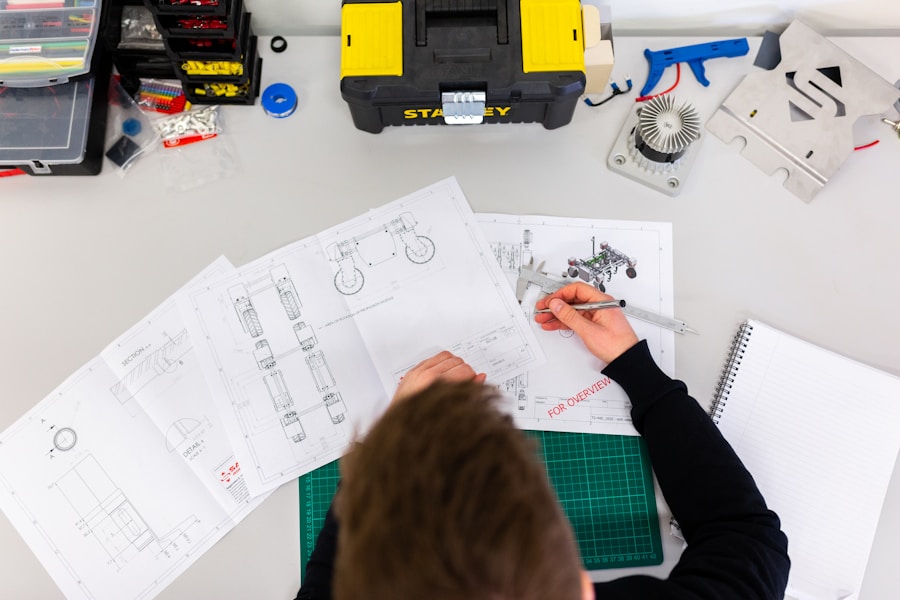

Post-production identification remains a practical problem in additive manufacturing (AM) because multiple parts are often produced in a single build and later collected together for downstream handling. Once placed into a shared bin, parts can lose their association with the original digital files, leaving technicians to sort and identify them manually.

CAD-Powered Identification

The new system uses CAD models to identify printed parts after fabrication, addressing a step that remains largely manual in AM workflows. In the described workflow, a worker wearing smart glasses picks up an object, captures an image, and receives identification support from a vision model.

Instead of retraining a model whenever new parts are introduced, the approach builds a prototype representation for each object from rendered views of its CAD model and compares real photographs against those prototypes at inference time. Adding a new object therefore only requires its CAD model, not a new training run.

How It Works

The classification process uses a prototype-based approach in which each object is represented by a feature vector derived from multiple rendered views of its CAD model. During inference, a captured image is encoded into the same feature space and compared against these prototype representations using cosine similarity, with the closest match determining the predicted class.

This design decouples the model from any fixed set of object categories, allowing it to operate on arbitrary collections of parts without additional training, provided that corresponding CAD models are available.
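The prototype matching described above can be illustrated with a minimal sketch. It assumes that features have already been extracted by some encoder, and that a prototype is the normalized mean of the per-view features; the article only says the prototype is "derived from" multiple rendered views, so the averaging step, the function names, and the toy three-dimensional vectors are all assumptions made for illustration.

```python
import math
from typing import Dict, List, Sequence

Vector = List[float]


def l2_normalize(v: Sequence[float]) -> Vector:
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in v]


def build_prototype(view_features: Sequence[Sequence[float]]) -> Vector:
    """Combine features of several rendered views into one prototype.

    Assumption: the prototype is the normalized mean of the normalized
    per-view features. The source does not specify the aggregation.
    """
    normalized = [l2_normalize(f) for f in view_features]
    dim = len(normalized[0])
    mean = [sum(f[i] for f in normalized) / len(normalized) for i in range(dim)]
    return l2_normalize(mean)


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors."""
    a_n, b_n = l2_normalize(a), l2_normalize(b)
    return sum(x * y for x, y in zip(a_n, b_n))


def classify(query: Sequence[float], prototypes: Dict[str, Vector]) -> str:
    """Return the name of the prototype closest to the query feature."""
    return max(prototypes, key=lambda name: cosine_similarity(query, prototypes[name]))


# Toy features standing in for encoder outputs of rendered CAD views.
prototypes = {
    "bracket": build_prototype([[1.0, 0.1, 0.0], [0.9, 0.2, 0.0]]),
    "gear": build_prototype([[0.0, 1.0, 0.1], [0.1, 0.9, 0.0]]),
}
first_prediction = classify([0.8, 0.3, 0.1], prototypes)  # "bracket"

# A new part is added by computing one more prototype; nothing is retrained.
prototypes["hinge"] = build_prototype([[0.0, 0.0, 1.0]])
second_prediction = classify([0.0, 0.1, 0.9], prototypes)  # "hinge"
```

Because the set of classes lives entirely in the `prototypes` dictionary, swapping in a different collection of parts changes only that dictionary and leaves the encoder untouched.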

ThingiPrint Dataset

The researchers also introduced ThingiPrint, a public dataset pairing CAD models with photographs of their 3D printed counterparts. The dataset includes:

- 100 models from Thingi10K, fabricated with a Sindoh S100 industrial SLS system using white PA12 powder

- Approximately 10 photographs per object, captured with smart glasses while the object was rotated by hand

- 20 additional objects reprinted on a Prusa MK4 using white PLA filament

Impressive Results

Among pretrained models tested, DINOv2 achieved 61.8% top-1 accuracy, compared with 35.9% for ResNet50 and 27.1% for CLIP. After fine-tuning, the DINOv2-based model reached 76.5% top-1 accuracy and 94.0% top-5 accuracy.
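For readers unfamiliar with the metrics, top-1 accuracy counts a prediction as correct only when the best-ranked class matches the true part, while top-5 accuracy counts it as correct when the true part appears anywhere among the five best-ranked classes. The following sketch shows the computation on made-up rankings; the function name and the toy data are illustrative and are not taken from the paper's evaluation code.

```python
from typing import Sequence


def top_k_accuracy(
    ranked_predictions: Sequence[Sequence[str]],
    labels: Sequence[str],
    k: int,
) -> float:
    """Fraction of queries whose true label is among the k best-ranked classes.

    ranked_predictions[i] lists class names for query i, most similar first.
    """
    if len(ranked_predictions) != len(labels):
        raise ValueError("need exactly one ranking per label")
    if not labels:
        raise ValueError("need at least one query")
    hits = sum(
        1 for ranked, label in zip(ranked_predictions, labels) if label in ranked[:k]
    )
    return hits / len(labels)


# Four toy queries over three hypothetical classes.
rankings = [
    ["a", "b", "c"],
    ["b", "a", "c"],
    ["c", "a", "b"],
    ["a", "c", "b"],
]
true_labels = ["a", "a", "b", "b"]

top1 = top_k_accuracy(rankings, true_labels, k=1)  # 0.25
top2 = top_k_accuracy(rankings, true_labels, k=2)  # 0.5
```

In the workflow described earlier, a high top-5 score matters because the system offers identification support to a worker, who can pick the right part from a short list of candidates.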

The training procedure used a contrastive objective designed to produce rotation-invariant representations, so that different views of the same object map to similar features.
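The article does not say which contrastive loss was used. As an illustration of the general idea only, the sketch below implements an InfoNCE-style loss, a common choice for this kind of objective: features of two views of the same object are pulled together, while features of different objects in the batch are pushed apart. The function name, the temperature value, and the toy vectors are assumptions.

```python
import math
from typing import List, Sequence


def l2_normalize(v: Sequence[float]) -> List[float]:
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in v]


def info_nce_loss(
    anchors: Sequence[Sequence[float]],
    positives: Sequence[Sequence[float]],
    temperature: float = 0.1,
) -> float:
    """InfoNCE-style loss over a batch of view pairs.

    anchors[i] and positives[i] are features of two different views of
    object i. Every positives[j] with j != i acts as a negative for anchor i.
    """
    if len(anchors) != len(positives) or not anchors:
        raise ValueError("need equally sized, non-empty batches")
    a = [l2_normalize(v) for v in anchors]
    p = [l2_normalize(v) for v in positives]
    total = 0.0
    for i, a_i in enumerate(a):
        # Cosine similarities to every candidate view, scaled by temperature.
        logits = [sum(x * y for x, y in zip(a_i, p_j)) / temperature for p_j in p]
        # Numerically stable log-sum-exp for the softmax denominator.
        m = max(logits)
        log_denominator = m + math.log(sum(math.exp(l - m) for l in logits))
        # Cross-entropy with the matching view as the correct class.
        total += log_denominator - logits[i]
    return total / len(a)


# Views that agree per object yield a lower loss than mismatched views.
aligned_loss = info_nce_loss([[1.0, 0.0], [0.0, 1.0]], [[0.9, 0.1], [0.1, 0.9]])
swapped_loss = info_nce_loss([[1.0, 0.0], [0.0, 1.0]], [[0.1, 0.9], [0.9, 0.1]])
```

Minimizing a loss of this shape rewards an encoder for giving two rotated views of one part nearly the same feature vector, which is the property the prototype comparison relies on.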

Industry Impact

This research could streamline manufacturing workflows by automating part identification, which would reduce manual sorting errors and speed up downstream steps such as quality control in 3D printing operations.
